Three Ways to Get Predictions from Your Model Without Coding

Feb 26, 2026

You trained a model. Great. Now what?

Most ML platforms stop here and hand you a pickled model file or a Docker image. You're on your own for the hard part: getting predictions back into your product.

This means setting up inference pipelines, configuring Kubernetes, managing model serving infrastructure, and handling versioning. The "inference tax" burns weeks before your first production prediction.

We built Impulse to eliminate this. Every model you train gets three inference methods automatically, all hosted on our infrastructure. No K8s. No Docker. No ops.

Here's how it works.

Method 1: In-App Testing

After training completes, your model card has a "Make Prediction" button. Enter your feature values directly in the UI, hit predict, get results instantly.

This is for quick sanity checks. Test edge cases. Validate that the model works before you integrate it into your product. It isn't meant for production traffic, but it's fast and easy during development.

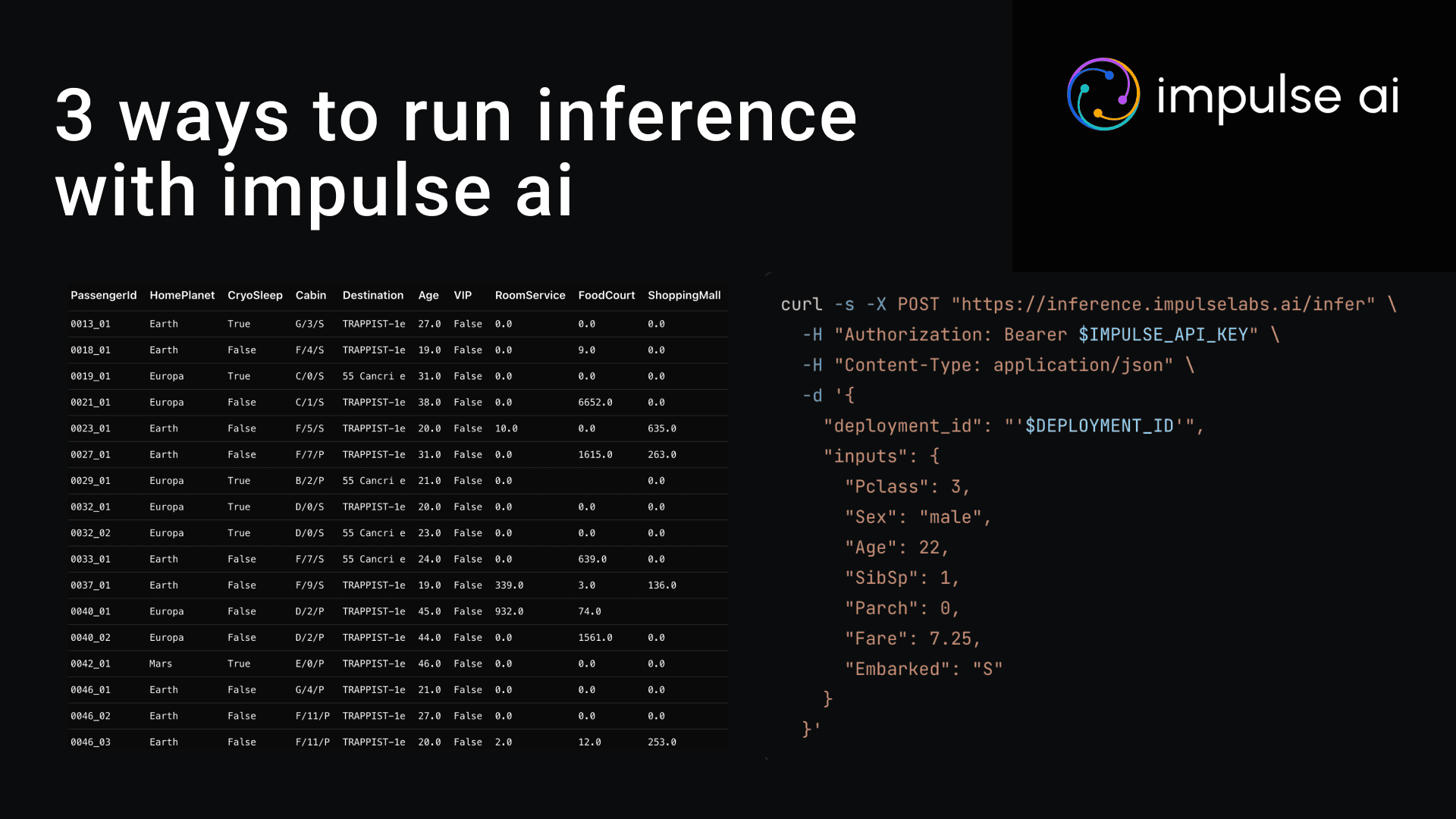

Method 2: Batch Processing

Upload a CSV or Excel file. Get back predictions for every row.

Here's an example from our Spaceship Titanic dataset. We wanted to predict whether each passenger was transported. Upload the CSV with passenger features, then download the same file with a new column showing the predictions: 1 means transported, 0 means not.

No scripting. No batch job configuration. Just upload and download. This is how you score thousands of records without writing inference code.
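
Once you have the scored file, a few lines are enough to check what came back. The sketch below is illustrative only: the column name `prediction` is an assumption (check the header of your downloaded file), and the three sample rows are made up to stand in for a real download.

```python
import csv
import io
from collections import Counter


def summarize_predictions(csv_text: str, column: str = "prediction") -> Counter:
    """Count how many rows received each predicted label.

    `column` is a hypothetical name for the added prediction column.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(int(row[column]) for row in reader)


# Made-up stand-in for a downloaded file; a real one would be read from disk.
sample = """PassengerId,HomePlanet,Age,prediction
0001_01,Europa,39,0
0002_01,Earth,24,1
0003_01,Europa,58,1
"""

counts = summarize_predictions(sample)
print(f"transported: {counts[1]}, not transported: {counts[0]}")
```

For a real file, pass the text from `open(path, newline="").read()` instead of the inline sample.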

Method 3: Production API

Every trained model gets a production API endpoint automatically. We host the model. You make HTTP requests.

Send a POST request with your features as JSON. Get back a prediction and a probability. The probability is the model's estimated confidence in that prediction: 0.88 means the model is 88% confident.

What we handle: Model hosting, autoscaling from 10 to 10,000 requests per minute, load balancing, cold start elimination, uptime, monitoring.

What you handle: Making HTTP requests from your code.

Standard REST API. Works with any tech stack.
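
Here is roughly what that looks like from Python, using only the standard library. Treat it as a sketch under assumptions rather than the documented API: the endpoint URL, the bearer-token header, the `features` wrapper in the request body, and the `prediction` / `probability` field names in the response are all placeholders. Use the values shown for your own model.

```python
import json
import urllib.request

# Hypothetical placeholders: copy the real endpoint and key from your model.
ENDPOINT = "https://example.invalid/v1/models/MODEL_ID/predict"
API_KEY = "YOUR_API_KEY"


def build_request(features: dict) -> urllib.request.Request:
    """Build a POST request carrying the features as a JSON body."""
    body = json.dumps({"features": features}).encode("utf-8")  # assumed body shape
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",  # assumed auth scheme
        },
    )


def parse_response(raw: bytes) -> tuple[int, float]:
    """Extract the prediction and probability (assumed field names)."""
    payload = json.loads(raw)
    return int(payload["prediction"]), float(payload["probability"])


def predict(features: dict, timeout: float = 10.0) -> tuple[int, float]:
    """Send the request and return (prediction, probability)."""
    with urllib.request.urlopen(build_request(features), timeout=timeout) as resp:
        return parse_response(resp.read())


# Offline check of the parsing step, using a made-up response body.
label, prob = parse_response(b'{"prediction": 1, "probability": 0.88}')
print(label, prob)
```

Keeping request building and response parsing in separate functions means you can test both without touching the network. Only `predict` makes the HTTP call.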

Why This Matters

Traditional ML deployment: Train model, export file, write Flask wrapper, containerize with Docker, deploy to Kubernetes, configure autoscaling, set up monitoring, debug production issues. Two weeks later, maybe it works.

Impulse deployment: Train model, copy API endpoint, make predictions.

The model you trained is already live and serving predictions. No deployment step exists.

You're not a DevOps engineer. You're building a product.

We built Impulse so you never have to think about model serving infrastructure. Train the model, get three inference methods automatically, ship features.

About Impulse AI

Impulse AI is building an autonomous machine learning engineer that turns data into production models from a simple prompt. Founded in 2025 and based in California, the company enables teams to build, deploy, and monitor expert-level ML models without code or specialized ML expertise. For more information, visit https://www.impulselabs.ai.